The latest release of Character Animator’s lip-sync engine, Lip Sync, improves automatic lip-syncing and the timing of mouth shapes called “visemes.” Both viseme detection and audio-based muting settings can be adjusted via the settings menu, where users can also fall back to an earlier engine iteration.

Limb IK, previously Arm IK, also arrives with the new Character Animator. It controls the bend directions and stretching of legs as well as arms, allowing artists to pin hands in place while moving the rest of the body. Pin Feet When Standing complements Limb IK: it’s a new option that keeps characters’ feet grounded when they’re not walking, leading to more realistic mid-body poses, like squats. Using the new Set Rest Pose option, users can animate back to the default position when they recalibrate, so they can use it during a live performance without causing their character to jump abruptly. Merge Takes lets users combine multiple Lip Sync or Trigger takes into a single row.

Adobe today announced the beta launch of new features for Adobe Character Animator (version 3.4), its desktop software that combines live motion capture with a recording system to control 2D puppets drawn in Photoshop or Illustrator. Several of the features, including Speech-Aware Animation and Lip Sync, are powered by Sensei, Adobe’s cross-platform machine learning technology, and leverage algorithms to generate animation from recorded speech and align mouth movements for speaking parts.

AI is becoming increasingly central to film and television production, particularly as the pandemic necessitates resource-constrained remote work arrangements. Pixar is experimenting with AI and generative adversarial networks to produce high-resolution animation content, while Disney recently detailed in a technical paper a system that creates storyboard animations from scripts. And stop-motion animation studios like Laika are employing AI to automatically remove seam lines in frames.

Adobe Character Animator is currently in public preview mode (in other words, beta) and will eventually be part of Adobe Creative Cloud. It can be launched directly from your applications.
Adobe Character Animator installs automatically with Adobe After Effects and can be accessed through the After Effects “File” menu.

When you want to animate limbs, it's easy to make a bone structure that lets you move those parts with a mouse or other control. Specific positions or animations can even be assigned to keyboard shortcuts to make it easy for you to animate live. Instead of getting everything right the first time, Adobe Character Animator lets you target specific pieces for recording. Thus, you can layer your animations over each other, such as recording facial animations, then lip-syncing, then body, and so on. Once recorded, you have full control over the animations to tweak as you wish.

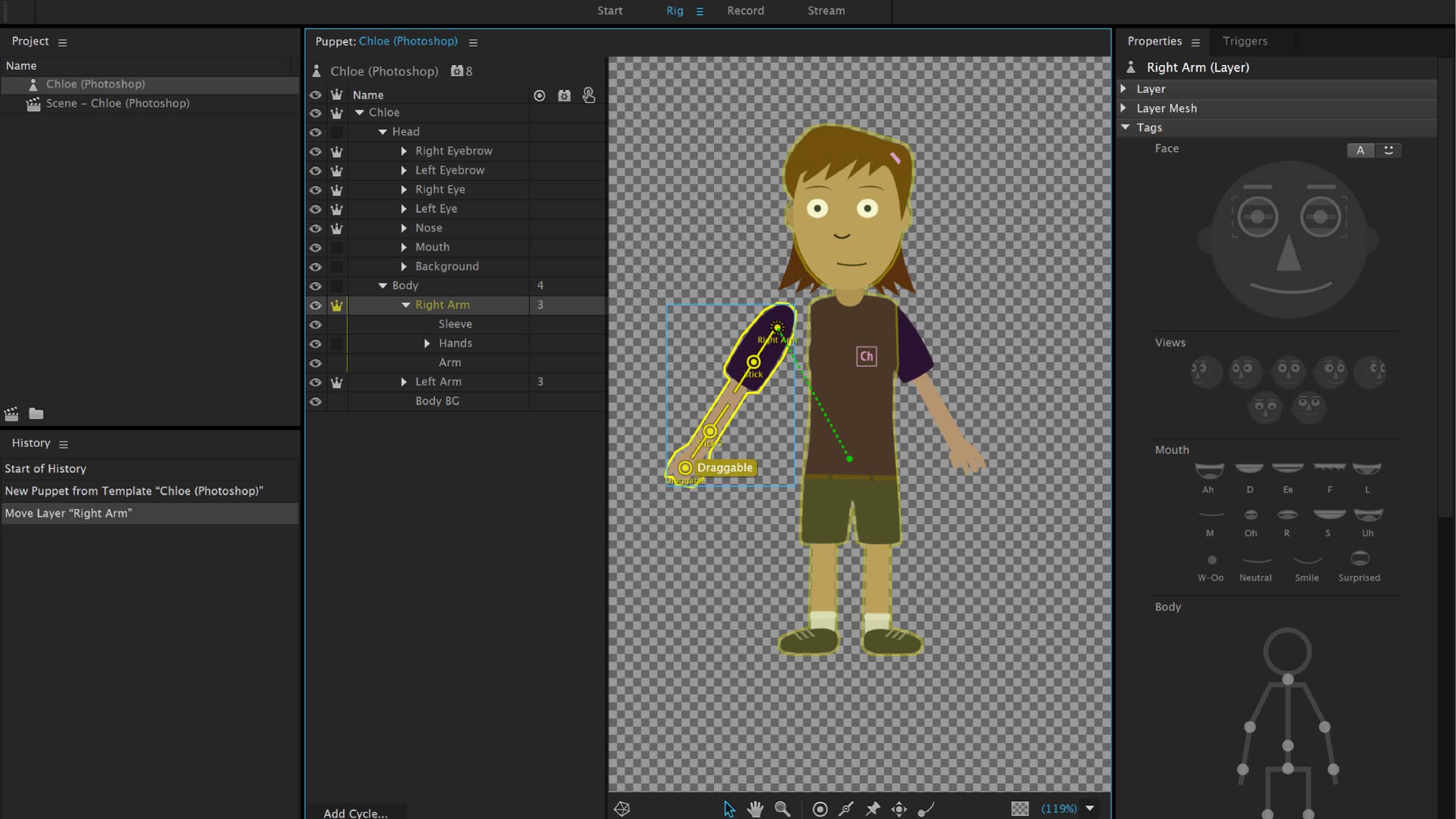

Character animation can be an engaging way to make video promos, explainers, or podcast teasers. Adobe Character Animator will make that easy! At NAB Show 2016, Adobe previewed great features coming soon to the prerelease Adobe Character Animator, packaged with After Effects.

Character Animator imports layers from a Photoshop or Illustrator file to become designated parts of a character. You can either name the parts of your character for Character Animator to automatically recognize, or you can tag the parts as necessary. The easiest way to get started with Adobe Character Animator is to look at the samples in the welcome screen, as well as the video tutorials. Each character needs different mouth and eye images; Character Animator can then automate the animations beyond that. Where Character Animator gets really easy is that it can listen to a real-time or recorded voice and animate the mouth automatically. Plus, a webcam (or recorded video) can be used to animate eye, eyebrow, head, and other movements.
